The JMS (Java Message Service) specification is well known to anyone working with messaging platforms, but it has been around for quite some time and, honestly, has not been updated much lately, which leaves a lot of room for enhancements and extensions.

As you can imagine, Apache ActiveMQ goes beyond the JMS spec and implements lots of cool things that give you more functionality, performance and scalability.

Starting from the top with the ConnectionFactory object, ActiveMQ has its own implementation called ActiveMQConnectionFactory. Other JMS providers also ship their own specific factories, but ActiveMQ's adds some settings that you can activate right away with little configuration and definitely see gains in performance. Of course, when you optimize for performance you usually pay for it with more CPU utilization, but I think CPU cycles are getting really cheap compared with network bandwidth and disk I/O.

Here is a list of things you can use to optimize performance by extending the JMS specification with Apache ActiveMQ:

disableTimeStampsByDefault - Controls whether the timestamp is left out of the message header. The story behind this is that when you send a JMS message (that is, when you call the send() method) the client has to make a call to the system kernel to get the timestamp. If the timestamp is not required in your messaging application, setting this to true saves you that trip and a little time on every send.

copyMessageOnSend - The Java Message Service specification says that the send method has to make a copy of the message object before sending it from the client to the broker. Setting the copyMessageOnSend property to false (the default is true) saves you a cycle: the client won't make that copy of the message, which saves local resources. This is only safe if you do not reuse or modify the message object after sending it.

useCompression - I think the property name here is self-explanatory: the ActiveMQ client will compress the message payload before it goes to the broker. So the question is, why not use compression all the time and send smaller messages over the wire? Well, in order to compress the message payload you're going to spend CPU time, so the point is CPU vs. I/O. It's a trade-off you have to consider: spend more CPU compressing the message, or spend more bandwidth sending larger messages. Personally, I believe (and have seen) that if you have the chance to use compression you definitely should.
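
To get a feel for the CPU vs. I/O trade-off on your own payloads, here is a minimal sketch that uses the JDK's java.util.zip.Deflater as a stand-in for client-side compression. It is not ActiveMQ's internal code path, and the CompressionTradeoffSketch class, the deflatedSize helper and the sample payload are all made up for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.zip.Deflater;

// Illustration only: measures how much a payload shrinks and how long that takes.
// This stands in for client-side compression; it does not call ActiveMQ.
final class CompressionTradeoffSketch {

    // Compresses the payload with the JDK Deflater and returns the compressed size in bytes.
    static int deflatedSize(byte[] payload) {
        Deflater deflater = new Deflater();
        deflater.setInput(payload);
        deflater.finish();
        byte[] buffer = new byte[4096];
        int total = 0;
        while (!deflater.finished()) {
            total += deflater.deflate(buffer);
        }
        deflater.end(); // release native resources
        return total;
    }

    public static void main(String[] args) {
        // Hypothetical payload: a repetitive XML-like document.
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < 1000; i++) {
            sb.append("<order><sku>ABC-123</sku><qty>1</qty></order>");
        }
        byte[] payload = sb.toString().getBytes(StandardCharsets.UTF_8);

        long start = System.nanoTime();
        int compressed = deflatedSize(payload);
        long micros = (System.nanoTime() - start) / 1_000;

        System.out.println("raw bytes:        " + payload.length);
        System.out.println("compressed bytes: " + compressed);
        System.out.println("time spent (us):  " + micros);
    }
}
```

Run it against a sample of your real messages rather than this synthetic one. Small or already-compressed payloads (images, zipped files) may gain little or nothing, which is where the trade-off tips the other way.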

objectMessageSerializationDefered - Imagine that you're sending an ObjectMessage and you're using an embedded broker, for example (client and broker running in the same JVM). This feature tells the client to delay the object serialization for as long as it can, until the message needs to hit the wire or be stored on disk. Basically, nothing that stays inside the same JVM should be serialized, because marshalling and unmarshalling objects can be expensive.

useAsyncSend - Let's say you're sending NON_PERSISTENT messages to the broker. That is a non-blocking call with no immediate acknowledgement; it's very fast, but it's still not reliable messaging.

On the other hand, you can use PERSISTENT messages, which makes the send a blocking call from the client to the broker. One of the things you can do is set useAsyncSend to true (the default is false), which changes the behavior of the PERSISTENT delivery mode to be asynchronous, with no acknowledgement expected. The send() method then returns immediately, which gives you more performance because it reduces the time the message producer waits for the message to be delivered. Keep in mind that this setting can cause message loss, but that's another trade-off you have to consider when designing your messaging infrastructure.

This is a good approach if you can tolerate some message loss, typically on systems where the most recent information (the last message) is always what you care about, or when you have a reconciliation process in place to catch those conditions.

useRetroactiveConsumer - This feature lets a topic keep a limited window of recent messages in memory, so a consumer that subscribes late can still receive them. You configure a recovery policy on the broker to deliver, for example, the last 10 messages sent to the topic, the last 5MB of data that went through the topic, or simply the last message sent to the topic, and all of that without the need for durable subscribers :-)

This is actually far more lightweight than using durable subscribers, and it's really easy to configure and use.

Finally, since this is not standard JMS behavior, it is of course not enabled by default.
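
All of the settings above can be set either through the matching setters on ActiveMQConnectionFactory (for example setUseAsyncSend(true)) or as options on the broker URL using the jms. prefix. Here is a minimal sketch of the URL route. The BrokerUrlSketch class and the withJmsOptions helper are hypothetical, the host and port are placeholders, and the values are only an example of turning everything on, not a recommendation.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Illustration only: builds a broker URL carrying the connection factory
// settings discussed above as "jms."-prefixed options.
final class BrokerUrlSketch {

    // Appends each option as jms.<name>=<value>.
    // Assumes baseUrl does not already contain a query string.
    static String withJmsOptions(String baseUrl, Map<String, Object> options) {
        if (options.isEmpty()) {
            return baseUrl;
        }
        StringJoiner query = new StringJoiner("&");
        for (Map.Entry<String, Object> option : options.entrySet()) {
            query.add("jms." + option.getKey() + "=" + option.getValue());
        }
        return baseUrl + "?" + query;
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the options in insertion order.
        Map<String, Object> options = new LinkedHashMap<>();
        options.put("disableTimeStampsByDefault", true);
        options.put("copyMessageOnSend", false);
        options.put("useCompression", true);
        options.put("objectMessageSerializationDefered", true);
        options.put("useAsyncSend", true);
        options.put("useRetroactiveConsumer", true);

        // Placeholder broker address; replace with your own.
        String url = withJmsOptions("tcp://localhost:61616", options);
        System.out.println(url);

        // With the ActiveMQ client library on the classpath, the URL would be used as:
        //   ConnectionFactory factory = new ActiveMQConnectionFactory(url);
    }
}
```

Keeping the options in the URL means you can tune them per environment from configuration without recompiling. Enable them one at a time and measure, since each one carries the trade-off described in its section.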

For a complete list of ActiveMQ enhancements to the JMS specification see the ActiveMQ product documentation.

I hope this helps you to push the go-faster button in your messaging infrastructure.

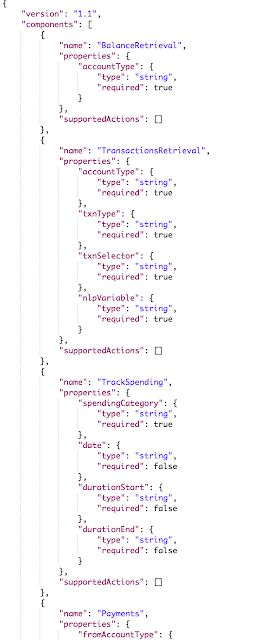
